https://www.cnblogs.com/qi123/p/9061008.html

https://zhuanlan.zhihu.com/p/42403181

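dmidecode is a tool that reads the contents of the computer's DMI (Desktop Management Interface) table and displays the system's hardware information in a human-readable format. (It is also described as reading SMBIOS, System Management BIOS, data.)

The table contains descriptions of the system's hardware components, as well as other useful information such as serial numbers, manufacturers, release dates, and BIOS revision numbers.

The DMI table describes not only the current system configuration; it can also report possible upgrades (for example, the fastest CPU or the largest amount of memory the system supports).

This helps when analyzing hardware compatibility, for example whether the machine supports the latest version of a program.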
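To make "reading the DMI table" concrete, here is a minimal Python sketch that walks a raw SMBIOS structure table. It assumes a kernel that exposes the raw table at /sys/firmware/dmi/tables/DMI (normally readable only by root), and the helper names iter_smbios, string_at, system_info and max_memory_bytes are hypothetical. The layout comes from the SMBIOS specification: each structure has a 4-byte header (type, length, handle), a formatted area, then a string set ending in two NUL bytes. Type 1 carries the manufacturer, product name and serial number; type 16 (Physical Memory Array) carries the maximum memory capacity, the kind of upgrade information mentioned above. This is an illustration of the data dmidecode decodes, not dmidecode's own implementation.

```python
import struct
from typing import Dict, Iterator, List, Tuple

# (type, handle, formatted area, string set); a hypothetical representation.
Structure = Tuple[int, int, bytes, List[str]]


def iter_smbios(table: bytes) -> Iterator[Structure]:
    """Walk a raw SMBIOS structure table, e.g. the bytes of /sys/firmware/dmi/tables/DMI."""
    offset = 0
    while offset + 4 <= len(table):
        # Header: type (u8), length of the formatted area (u8), handle (u16, little-endian).
        s_type, length, handle = struct.unpack_from("<BBH", table, offset)
        if length < 4:
            break  # malformed entry; stop instead of looping forever
        formatted = table[offset:offset + length]
        # The string set follows the formatted area and ends with two NUL bytes.
        end = table.find(b"\x00\x00", offset + length)
        if end == -1:
            break
        raw = table[offset + length:end]
        strings = [s.decode("latin-1") for s in raw.split(b"\x00") if s]
        yield s_type, handle, formatted, strings
        if s_type == 127:  # End-of-Table structure
            break
        offset = end + 2


def string_at(strings: List[str], index: int) -> str:
    # String fields store a 1-based index into the string set; 0 means "not set".
    return strings[index - 1] if 0 < index <= len(strings) else ""


def system_info(table: bytes) -> Dict[str, str]:
    """Decode type 1 (System Information), the first section `dmidecode -t system` prints."""
    for s_type, _, fmt, strings in iter_smbios(table):
        if s_type == 1 and len(fmt) >= 8:
            return {
                "manufacturer": string_at(strings, fmt[4]),
                "product_name": string_at(strings, fmt[5]),
                "version": string_at(strings, fmt[6]),
                "serial_number": string_at(strings, fmt[7]),
            }
    return {}


def max_memory_bytes(table: bytes) -> int:
    """Sum Maximum Capacity over type 16 (Physical Memory Array) entries used as system memory."""
    total = 0
    for s_type, _, fmt, _ in iter_smbios(table):
        if s_type != 16 or len(fmt) < 11 or fmt[5] != 0x03:  # Use 0x03 = "System memory"
            continue
        (cap_kb,) = struct.unpack_from("<I", fmt, 7)  # offset 07h, in kilobytes
        if cap_kb != 0x80000000:
            total += cap_kb * 1024
        elif len(fmt) >= 23:
            # 0x80000000 means "see Extended Maximum Capacity" (offset 0Fh, in bytes).
            (cap_bytes,) = struct.unpack_from("<Q", fmt, 15)
            total += cap_bytes
    return total
```

As root, read /sys/firmware/dmi/tables/DMI in binary mode, pass the bytes to system_info() or max_memory_bytes(), and compare the results with what dmidecode -t system and dmidecode -t memory print.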

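inxi is a nifty little tool for viewing hardware information on Linux. It offers a large number of options for retrieving hardware information, more than I have seen in any other existing Linux tool. It is a fork of infobash, an old but still remarkably flexible script written by locsmif.

inxi is a script that can quickly show the system's hardware, CPU, drivers, Xorg, desktop, kernel, GCC version, processes, memory usage, and much other useful information. It can also serve as a technical-support and debugging tool.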
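One of the items inxi reports, memory usage, can be derived from /proc/meminfo. The sketch below shows one reasonable way to do it, assuming used memory is MemTotal minus MemAvailable (MemAvailable exists since Linux 3.14); inxi's own calculation may differ, and parse_meminfo and memory_usage are hypothetical names.

```python
from typing import Dict, Tuple


def parse_meminfo(text: str) -> Dict[str, int]:
    """Parse /proc/meminfo lines such as 'MemTotal:  16303228 kB'."""
    values: Dict[str, int] = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        fields = rest.split()
        if not sep or not fields:
            continue
        number = int(fields[0])
        # 'kB' here means KiB; convert to bytes. Fields like HugePages_Total are plain counts.
        values[key.strip()] = number * 1024 if fields[1:] == ["kB"] else number
    return values


def memory_usage(text: str) -> Tuple[int, int]:
    """Return (used, total) in bytes, counting MemAvailable as not used."""
    info = parse_meminfo(text)
    total = info["MemTotal"]
    return total - info["MemAvailable"], total
```

On a live system: open /proc/meminfo, read it, and pass the text to memory_usage().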

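lshw (Hardware Lister) is a small, flexible tool that produces detailed reports on the machine's hardware components, such as memory configuration, firmware version, mainboard configuration, CPU version and speed, cache configuration, USB, network cards, graphics cards, multimedia devices, printers, and bus speed.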

It builds this information by reading various files under /proc and the DMI table.
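As a small illustration of the /proc side, the sketch below parses /proc/cpuinfo, which on x86 lists one "key : value" block per logical CPU, separated by blank lines. parse_cpuinfo is a hypothetical helper, not lshw's code, and the field names vary by architecture (ARM kernels, for example, use different keys).

```python
from typing import Dict, List


def parse_cpuinfo(text: str) -> List[Dict[str, str]]:
    """Split /proc/cpuinfo into one dict per block (one block per logical CPU on x86)."""
    blocks: List[Dict[str, str]] = []
    current: Dict[str, str] = {}
    for line in text.splitlines():
        if not line.strip():
            # A blank line ends the current block.
            if current:
                blocks.append(current)
                current = {}
            continue
        key, sep, value = line.partition(":")
        if sep:
            # Keys are padded with tabs, e.g. "model name\t: ...".
            current[key.strip()] = value.strip()
    if current:
        blocks.append(current)
    return blocks
```

For example, len(parse_cpuinfo(text)) on the contents of /proc/cpuinfo gives the number of logical CPUs on a typical x86 machine.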

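lshw must be run as root to detect the full hardware information; otherwise it reports only part of it. lshw has a special option, -class, that shows detailed information about one specific class of hardware.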
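lshw can also print its whole report as JSON (lshw -json). Assuming that output is valid JSON in which each node carries a "class" key and an optional "children" list, a minimal sketch of doing the -class filtering yourself might look like this; load_lshw_json, iter_nodes and nodes_of_class are hypothetical names, and the command still needs root for the complete tree.

```python
import json
import subprocess
from typing import Any, Dict, Iterator, List, Union

Node = Dict[str, Any]
Tree = Union[Node, List[Node]]


def load_lshw_json() -> Tree:
    # Runs `lshw -json`; needs lshw installed and root for the complete report.
    out = subprocess.run(["lshw", "-json"], capture_output=True, text=True, check=True).stdout
    return json.loads(out)


def iter_nodes(tree: Tree) -> Iterator[Node]:
    """Depth-first, pre-order walk; each node may have a 'children' list."""
    stack = list(reversed(tree)) if isinstance(tree, list) else [tree]
    while stack:
        node = stack.pop()
        yield node
        # Push children in reverse so they come off the stack in their original order.
        stack.extend(reversed(node.get("children", [])))


def nodes_of_class(tree: Tree, hw_class: str) -> List[Node]:
    """Roughly the selection `lshw -class <hw_class>` makes, applied to the full dump."""
    return [node for node in iter_nodes(tree) if node.get("class") == hw_class]
```

nodes_of_class(load_lshw_json(), "processor") should return roughly the nodes that lshw -class processor displays.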

The kernel exposes some DMI information in files under /sys (in /sys/class/dmi/id). We can therefore easily get the machine type by running grep on those files.

Alternatively, you can use cat to print just one specific detail.
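The same files can be read from a program. This sketch assumes the usual location /sys/class/dmi/id (with attributes such as sys_vendor, product_name, board_name and bios_version) and simply skips files it cannot read, because attributes such as product_serial and product_uuid are normally readable only by root. The function name read_dmi_id is hypothetical.

```python
import os
from typing import Dict

DMI_ID_DIR = "/sys/class/dmi/id"


def read_dmi_id(base: str = DMI_ID_DIR) -> Dict[str, str]:
    """Read every readable attribute file, similar in spirit to grepping /sys/class/dmi/id/*."""
    info: Dict[str, str] = {}
    for name in sorted(os.listdir(base)):
        path = os.path.join(base, name)
        if not os.path.isfile(path):
            continue  # skip subdirectories such as power/ and the subsystem symlink
        try:
            with open(path, encoding="utf-8", errors="replace") as f:
                info[name] = f.read().strip()
        except OSError:
            # Root-only attributes (product_serial, product_uuid, ...) raise PermissionError.
            continue
    return info
```

read_dmi_id().get("product_name") gives the same value as cat /sys/class/dmi/id/product_name.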

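On Linux, the dmesg command prints the kernel's messages, including the boot-time messages logged before syslogd or klogd start. It gets its data by reading the kernel ring buffer. dmesg is very useful when troubleshooting, or simply when trying to find out about the system's hardware.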
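One interface to the ring buffer is /dev/kmsg (recent util-linux versions of dmesg read it when it is available), where each read() returns one record of the form "prefix,sequence,timestamp,flags;message". The sketch below parses that format and walks the buffer without blocking. It assumes a kernel with /dev/kmsg and permission to read it (kernel.dmesg_restrict may deny this to unprivileged users); KmsgRecord, parse_kmsg_record and read_ring_buffer are hypothetical names.

```python
import os
from typing import Iterator, NamedTuple, Optional


class KmsgRecord(NamedTuple):
    facility: int
    level: int         # syslog level: 0 = emerg ... 7 = debug
    seq: int
    timestamp_us: int  # microseconds since boot
    message: str


def parse_kmsg_record(record: str) -> Optional[KmsgRecord]:
    """Parse '<prefix>,<seq>,<timestamp>,<flags>[,...];<message>' as read from /dev/kmsg."""
    header, sep, body = record.partition(";")
    if not sep:
        return None
    fields = header.split(",")
    if len(fields) < 4:
        return None
    prefix, seq, ts = int(fields[0]), int(fields[1]), int(fields[2])
    # Only the first line is the message; continuation lines (" KEY=value") carry metadata.
    message = body.split("\n", 1)[0]
    return KmsgRecord(prefix >> 3, prefix & 7, seq, ts, message)


def read_ring_buffer(path: str = "/dev/kmsg") -> Iterator[KmsgRecord]:
    """Yield the records currently in the kernel ring buffer, oldest first."""
    fd = os.open(path, os.O_RDONLY | os.O_NONBLOCK)
    try:
        while True:
            try:
                chunk = os.read(fd, 8192)  # each read() returns exactly one record
            except BlockingIOError:
                break  # caught up with the newest record
            except BrokenPipeError:
                continue  # records were overwritten while reading; skip ahead
            rec = parse_kmsg_record(chunk.decode("utf-8", errors="replace"))
            if rec is not None:
                yield rec
    finally:
        os.close(fd)
```

Filtering in the style of dmesg | grep -i usb then becomes a list comprehension over read_ring_buffer() that keeps records whose message contains "usb" (case-insensitively).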

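hwinfo (hardware information) is another great tool for detecting the hardware present in the current system and showing detailed information about the various hardware modules in a human-readable form.

It reports on the CPU, memory, keyboard, mouse, graphics card, sound card, storage, network interfaces, disks, partitions, BIOS, bridges, and more. It can show more detailed information than other tools such as lshw, dmidecode, or inxi.

hwinfo uses the libhd library (libhd.so) to collect hardware information on the system. The tool was designed specifically for openSUSE; other distributions later added it to their official repositories.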

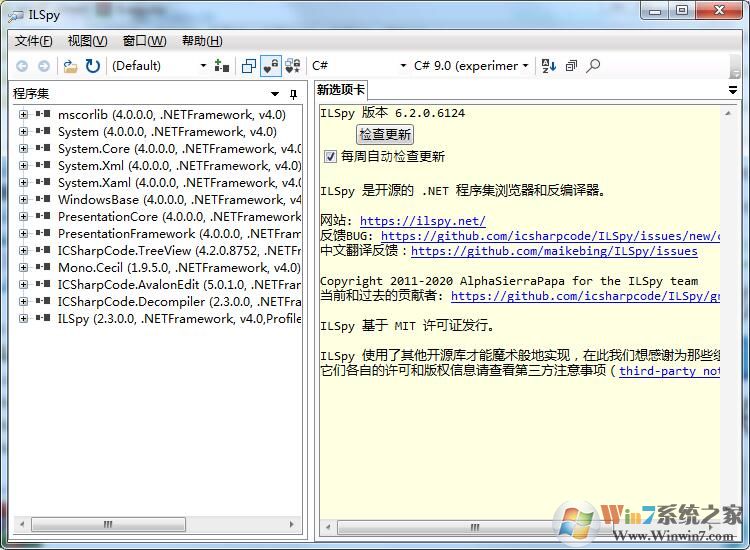

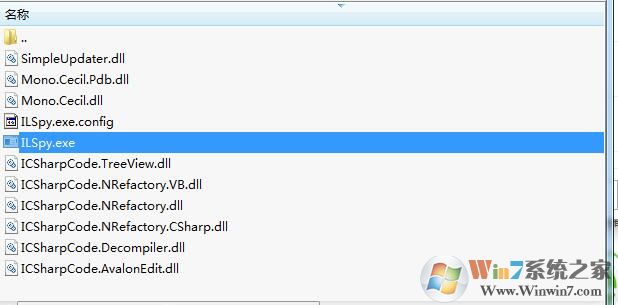

http://www.winwin7.com/soft/15515.html

ilspy.exe is the main executable of the well-known ILSpy decompiler. If you need to browse and decompile .NET assemblies, it is a very good tool.